Why Your Enterprise Doesn't Need a Custom AI Model — It Needs Claude AI

A few months ago, a CTO told me his company had spent $400,000 building a custom language model for generating sales proposals. Eight months of development. A team of three ML engineers. The model worked, technically. But it could only do one thing, and every time their proposal template changed, they had to retrain it. I set up a Claude AI project with structured instructions and their existing templates in about two weeks. It handled the same task, plus six others they hadn't even asked for.

The custom model trap

I keep having the same conversation. A company decides they need AI. Someone (usually from engineering, sometimes a consultant) recommends building a custom solution. Fine-tune a model on your data. Train it on your documents. Build something proprietary.

It sounds right. Your business is unique, so your AI should be unique too. Right?

Here's what actually happens. The project takes six to twelve months. It costs $200K-$500K when you factor in ML engineers, data labeling, infrastructure, and the opportunity cost of everyone involved. The resulting model does one thing well. Maybe two. And the moment your business process changes (new pricing, new compliance requirement, new product line), someone has to retrain the model. More data. More engineering time. More money.

Meanwhile, Anthropic ships Claude AI updates every few months that make the base model better at everything, for free.

I'm not saying custom models are never the right call. But after deploying Claude AI into real business workflows for the past year, I can tell you the bar for “you actually need a custom model” is much higher than most companies think.

What I've learned from actual deployments

At Orient Printing & Packaging, I mapped 49 use cases across seven departments. Not hypotheticals. Real workflows that real people do every day: writing sales proposals, generating RFQs, troubleshooting machinery, drafting job descriptions, calculating payroll with Indian statutory compliance, analyzing vendor pricing, writing customer emails.

Every single one of those 49 use cases works with Claude AI. No fine-tuning. No custom model. No ML engineers. Just well-written instructions and the right knowledge files.

I was honestly surprised by some of them. Take the payroll calculations: PF (Provident Fund), ESI (Employees' State Insurance), TDS (tax deducted at source), all the statutory deductions specific to Indian labor and tax law. I assumed that would need something specialized. It didn't. Claude AI handles the math correctly when you give it the rules and the salary data. The key was structuring the instructions precisely, not training a new model.
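Here is a minimal sketch of what "give it the rules and the salary data" can look like in practice. The statutory parameters live in one explicit table. That table is rendered into the instructions, and it also drives a small reference calculation for spot-checking Claude AI's figures. The rates below are illustrative assumptions that should be verified against current EPFO and ESIC notifications: EPF at 12% of basic wages capped at ₹15,000, and an ESI employee share of 0.75% for gross wages up to ₹21,000. Two-decimal rounding is a simplification. TDS is left out because it depends on the employee's tax regime and declarations. `PAYROLL_RULES`, `render_rules` and `expected_deductions` are hypothetical names.

```python
from decimal import Decimal, ROUND_HALF_UP

# Illustrative parameters only (assumption): verify against current EPFO/ESIC
# notifications. TDS is omitted because it depends on regime and declarations.
PAYROLL_RULES = {
    "pf_employee_rate": Decimal("0.12"),     # EPF employee share of basic wages
    "pf_wage_ceiling": Decimal("15000"),     # monthly wage ceiling for PF
    "esi_employee_rate": Decimal("0.0075"),  # ESI employee share of gross wages
    "esi_gross_limit": Decimal("21000"),     # ESI applies only up to this gross
}

CENT = Decimal("0.01")


def _pct(rate: Decimal) -> str:
    """Format a rate such as 0.0075 as '0.75' (no trailing zeros)."""
    return f"{(rate * 100).normalize():f}"


def render_rules(rules: dict) -> str:
    """Render the rule table as an explicit instruction block for the prompt."""
    return "\n".join([
        "Payroll rules (apply exactly and show each step):",
        f"- Employee PF = {_pct(rules['pf_employee_rate'])}% of basic wages, "
        f"with basic wages capped at INR {rules['pf_wage_ceiling']}.",
        f"- Employee ESI = {_pct(rules['esi_employee_rate'])}% of gross wages "
        f"if gross wages are at most INR {rules['esi_gross_limit']}; otherwise 0.",
        "- Round every amount to 2 decimal places.",
    ])


def expected_deductions(basic: Decimal, gross: Decimal, rules: dict = PAYROLL_RULES) -> dict:
    """Reference calculation used to spot-check the figures Claude AI reports."""
    pf_base = min(basic, rules["pf_wage_ceiling"])
    pf = (pf_base * rules["pf_employee_rate"]).quantize(CENT, rounding=ROUND_HALF_UP)
    if gross <= rules["esi_gross_limit"]:
        esi = (gross * rules["esi_employee_rate"]).quantize(CENT, rounding=ROUND_HALF_UP)
    else:
        esi = Decimal("0.00")
    return {"pf": pf, "esi": esi}


if __name__ == "__main__":
    print(render_rules(PAYROLL_RULES))
    # Basic 10,000 and gross 18,000: PF 1200.00, ESI 135.00
    print(expected_deductions(Decimal("10000"), Decimal("18000")))
```

Keeping the rules in one table means a statutory change becomes a one-line edit, and that edit updates the instructions and the cross-check together.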

That pattern held across all 49 use cases. The bottleneck was never the model. It was the instructions.

The 95% rule

After a year of doing this work, I've arrived at a rough heuristic. About 95% of the AI use cases that enterprises actually pursue can be handled by Claude AI with proper instruction engineering. The remaining 5% typically involve either real-time inference at massive scale (think millions of API calls per minute), highly specialized pattern recognition on proprietary data (like detecting manufacturing defects from images), or strict on-premise requirements where no data can leave the building.

Everything else? Claude AI can do it. And usually better than a custom solution, because Claude AI gets smarter every quarter while your custom model sits frozen at the moment you trained it.

Here's a quick breakdown of the use cases I've deployed:

| Use case | Custom model needed? | What Claude AI needs instead |
|---|---|---|
| Sales proposals and offers | No | Product specs + pricing rules + template |
| RFQ generation | No | Vendor list + requirements format + past RFQs |
| Customer email drafting | No | Brand voice guide + CRM context via MCP |
| Document summarization | No | Output format specification |
| Internal knowledge Q&A | No | Knowledge files + search via MCP |
| Troubleshooting guides | No | Technical manual as knowledge + decision tree |
| Job description writing | No | Role templates + company standards |
| Financial reporting | No | Report format + data access via MCP |
| Code review and assistance | No | Codebase context + style guide |
| Payroll calculations | No | Statutory rules + salary data |
| Translation and localization | No | Glossary + style preferences |
| Manufacturing defect detection | Yes | Specialized vision model needed |
| Real-time pricing at >1M req/min | Yes | Latency requirements too strict |

See the pattern? The “yes” cases are edge cases. The everyday work that actually consumes your team's hours? All Claude AI.

Why Claude AI specifically (and not just any LLM)

I get this question a lot. “Why not GPT-4? Why not Gemini? They can do the same things.”

They can do similar things. But after working with all three in production, I keep coming back to Claude AI for a few reasons that only show up when you're deploying at scale, not just playing around in a chat window.

Instruction following. This is the big one. When you give Claude AI a 2,000-word specification with edge cases, fallback behavior, and output formatting requirements, it follows them. Consistently. On the 500th run. I've tested the same complex instructions on GPT-4 and Gemini, and both drift more over long conversations and complex workflows. Claude AI stays on the rails.

Context window. Opus 4.6 supports up to 1 million tokens. That's more than a spec-sheet number: it means I can feed Claude AI an entire product catalogue, an entire compliance manual, and an entire customer history in a single conversation. Try doing that with a model that tops out at 128K tokens. You end up chunking, summarizing, and losing nuance.
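A back-of-the-envelope check makes the 1M versus 128K difference concrete. This sketch assumes roughly four characters per token, which is only a rule of thumb for English text. The document sizes and the 8,000-token reply reserve are hypothetical. For real numbers, count tokens with the model's own tokenizer.

```python
import math

# Rough assumption: ~4 characters per token for English prose. Real counts
# depend on the tokenizer and the content (tables and code often differ).
CHARS_PER_TOKEN = 4


def estimate_tokens(char_counts: dict[str, int]) -> int:
    """Approximate total tokens for a set of documents, given their sizes in characters."""
    return sum(math.ceil(n / CHARS_PER_TOKEN) for n in char_counts.values())


def fits_in_context(char_counts: dict[str, int], context_window: int,
                    reserve_for_output: int = 8_000) -> bool:
    """True if the documents plus headroom for the reply (an assumed reserve) fit."""
    return estimate_tokens(char_counts) + reserve_for_output <= context_window


# Hypothetical document sizes, in characters.
docs = {
    "product_catalogue": 1_200_000,
    "compliance_manual": 800_000,
    "customer_history": 400_000,
}

if __name__ == "__main__":
    print(estimate_tokens(docs))               # 600000
    print(fits_in_context(docs, 1_000_000))    # True
    print(fits_in_context(docs, 128_000))      # False
```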

MCP. This is the killer feature that nobody talks about enough. Model Context Protocol gives Claude AI a standardized way to connect to your business systems: your ERP, your CRM, your databases, your file storage. Anthropic introduced MCP as an open standard, and Claude supports it natively. With GPT-4, you're often building custom API bridges. With Claude AI, you build an MCP server once and it works everywhere.
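To make "build an MCP server once" less abstract, here is a toy handler that uses only the standard library. It covers the two JSON-RPC 2.0 methods an MCP client uses to discover and invoke tools: `tools/list` and `tools/call`. It illustrates the message shapes and is not a working server. It skips the protocol's initialization handshake and transport, and a real deployment would use an official MCP SDK. The `lookup_vendor` tool and its vendor data are hypothetical stand-ins for an ERP lookup.

```python
import json

# Hypothetical stand-in for an ERP vendor table.
VENDORS = {"V-001": {"name": "Example Board Mills", "lead_time_days": 14}}

# Tool metadata advertised to the client; inputSchema is a JSON Schema.
TOOLS = [{
    "name": "lookup_vendor",
    "description": "Look up an approved vendor by ID in the ERP.",
    "inputSchema": {
        "type": "object",
        "properties": {"vendor_id": {"type": "string"}},
        "required": ["vendor_id"],
    },
}]


def _result(req_id, result):
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


def _error(req_id, code, message):
    return json.dumps({"jsonrpc": "2.0", "id": req_id,
                       "error": {"code": code, "message": message}})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC request string and return the response string."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    if method == "tools/list":
        return _result(req_id, {"tools": TOOLS})
    if method == "tools/call":
        params = req.get("params", {})
        if params.get("name") != "lookup_vendor":
            return _error(req_id, -32602, f"Unknown tool: {params.get('name')}")
        vendor = VENDORS.get(params.get("arguments", {}).get("vendor_id"))
        if vendor is None:
            # Report the miss explicitly instead of inventing a vendor.
            return _result(req_id, {"content": [{"type": "text", "text": "Vendor not found"}],
                                    "isError": True})
        return _result(req_id, {"content": [{"type": "text", "text": json.dumps(vendor)}],
                                "isError": False})
    return _error(req_id, -32601, f"Method not found: {method}")


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
            "params": {"name": "lookup_vendor", "arguments": {"vendor_id": "V-001"}}}
    print(handle(json.dumps(call)))
```

The tool description and schema are written once. Any MCP-capable client can then discover the tool through `tools/list` without a bespoke integration.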

Safety by default. When I deploy AI into a manufacturing company's procurement workflow, I need to know the model won't hallucinate a vendor that doesn't exist or fabricate a price quote. Claude AI's training prioritizes honesty over helpfulness. It says “I don't know” instead of making something up. In a chatbot, that might feel unhelpful. In a production workflow handling real money? It's essential.

The real cost comparison

People fixate on API pricing when they compare AI options. That's the wrong number to look at. The real costs are development time, maintenance, and opportunity cost.

| | Custom model | Claude AI deployment |
|---|---|---|
| Time to first value | 6-12 months | 2-4 weeks |
| Upfront cost | $200K-$500K | $15K-$50K |
| Ongoing maintenance | ML engineer ($150K+/yr) | Instruction updates (hours/month) |
| Scope | 1-2 use cases | 10-20+ use cases |
| When process changes | Retrain the model (weeks) | Update instructions (hours) |
| Model improvements | You build them | Anthropic ships them free |

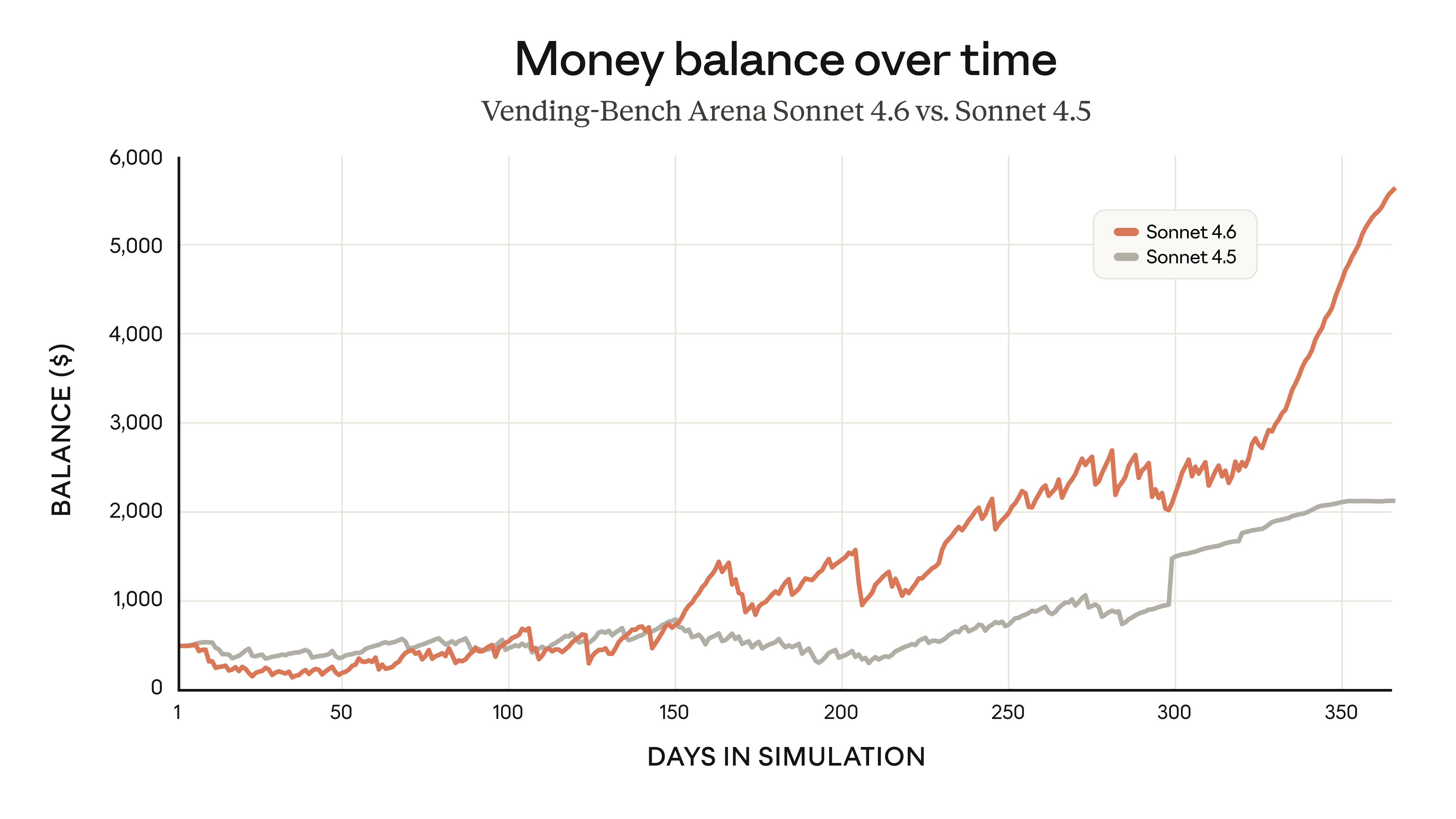

That last row is the one that matters most long-term. When Anthropic released Opus 4.6, every Claude AI deployment I'd ever built instantly got better. Better reasoning, longer context, more reliable tool use. My clients didn't have to do anything. They just woke up one morning with a smarter AI.

Try getting that with a custom model.
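One way to read the cost table is to ask how much a Claude AI deployment could spend each year before it caught up with a custom model over the same period. That yearly spend covers API usage and instruction upkeep, and the table prices neither. The sketch below uses only the table's figures: the low end of the custom-model range, the $150K+/yr ML engineer taken at its floor, and the high end of the Claude AI upfront range. It ignores retraining costs, so it understates the custom model's total. The function names are illustrative.

```python
def total_cost(upfront: float, annual: float, years: int) -> float:
    """Upfront spend plus a flat yearly running cost."""
    return upfront + annual * years


def break_even_annual_spend(custom_upfront: float, custom_annual: float,
                            claude_upfront: float, years: int) -> float:
    """Yearly Claude AI spend at which both options cost the same over `years`."""
    return (total_cost(custom_upfront, custom_annual, years) - claude_upfront) / years


if __name__ == "__main__":
    # Figures from the comparison table: custom $200K upfront + $150K/yr engineer,
    # Claude AI deployment $50K upfront (high end of the $15K-$50K range).
    print(total_cost(200_000, 150_000, 3))                       # 650000
    print(break_even_annual_spend(200_000, 150_000, 50_000, 3))  # 200000.0
```

On these numbers, yearly Claude AI running costs would have to pass about $200K before even the cheapest custom build came out ahead over three years.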

When you actually do need something custom

I want to be honest about the limits. There are cases where Claude AI isn't the answer.

Computer vision at scale. If you're running quality inspection on a manufacturing line and need to classify defects from camera images at thousands of frames per second, you need a specialized vision model trained on your specific product. Claude AI can analyze individual images, but it can't match the speed or accuracy of a purpose-built classifier for this kind of task.

Ultra-low latency. If your use case requires sub-50ms responses at millions of requests per minute (like real-time ad bidding or fraud detection), you need something smaller and faster than a large language model. Claude AI is fast, but it isn't built for millisecond-level responses at that volume.

Truly proprietary data patterns. If your competitive advantage is a pattern in your data that no general model could ever learn from public information (think drug discovery, genomics, or materials science), fine-tuning or training a custom model might be justified. But be honest with yourself about whether your data is actually that unique. Most business data isn't.

For everything else, the question isn't “can Claude AI do this?” It's “have I given Claude AI the right instructions and context to do this well?”
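The exceptions above, plus the on-premise case from the 95% rule, can be written down as a simple screening checklist. This sketches the heuristic and is not a formal test. The `UseCase` fields are hypothetical. The latency threshold (sub-50ms at one million or more requests per minute) comes straight from the text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UseCase:
    name: str
    peak_requests_per_minute: int = 0
    latency_budget_ms: Optional[float] = None
    high_speed_image_classification: bool = False  # e.g. defect detection on a line
    data_cannot_leave_premises: bool = False
    novel_proprietary_patterns: bool = False       # e.g. genomics, drug discovery


def custom_model_reasons(uc: UseCase) -> List[str]:
    """Return the exceptions that apply; an empty list means start with Claude AI."""
    reasons = []
    if uc.high_speed_image_classification:
        reasons.append("computer vision at scale")
    if (uc.latency_budget_ms is not None and uc.latency_budget_ms < 50
            and uc.peak_requests_per_minute >= 1_000_000):
        reasons.append("ultra-low latency at massive scale")
    if uc.data_cannot_leave_premises:
        reasons.append("strict on-premise requirement")
    if uc.novel_proprietary_patterns:
        reasons.append("truly proprietary data patterns")
    return reasons


if __name__ == "__main__":
    print(custom_model_reasons(UseCase("Sales proposals")))  # []
    bidding = UseCase("Ad bidding", peak_requests_per_minute=2_000_000, latency_budget_ms=30)
    print(custom_model_reasons(bidding))  # ['ultra-low latency at massive scale']
```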

The instruction engineering gap

Here's the part that trips people up. They try Claude AI for a business task, get mediocre results, and conclude they need something more specialized. But the problem wasn't Claude AI. The problem was a two-sentence prompt trying to do the job of a two-page specification.

I've seen this dozens of times. A company tests Claude AI by typing “write me a sales proposal” into the chat. Claude AI writes something generic. They say “see, it doesn't know our products.” Of course it doesn't. You didn't tell it your products.

The same company, with structured instructions that specify the product catalogue, the pricing rules, the proposal format, the compliance requirements, and the customer's history? Claude AI produces proposals that their sales team actually uses. No fine-tuning required.

I wrote about this in detail in my guide to structuring Claude AI for business. The short version: it's a three-layer architecture. Reference knowledge (what Claude AI should know), capability workflows (what Claude AI should do), and MCP connectors (what Claude AI can reach). Get those three right and you don't need a custom model. You need a well-deployed one.
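Here is a minimal sketch of how the three layers might come together in a request to Anthropic's Messages API. The payload is a plain dictionary built from the API's `model`, `max_tokens`, `system`, and `messages` fields. Reference knowledge and capability workflows are wrapped in XML-style tags inside the system prompt. The `Deployment` class, the tag names, and the sample content are hypothetical, and the model ID is a placeholder. The MCP layer is only listed by name, because connectors are attached through the client or API configuration rather than written into the prompt.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Deployment:
    knowledge: Dict[str, str]        # layer 1: reference knowledge (file name -> text)
    workflows: Dict[str, str]        # layer 2: capability workflows (name -> instructions)
    connectors: List[str] = field(default_factory=list)  # layer 3: MCP servers, by name


def build_system_prompt(d: Deployment) -> str:
    """Wrap knowledge and workflows in tags so each layer stays clearly delimited."""
    parts = ["<reference_knowledge>"]
    for name, text in d.knowledge.items():
        parts.append(f'<document name="{name}">\n{text}\n</document>')
    parts.append("</reference_knowledge>")
    parts.append("<workflows>")
    for name, steps in d.workflows.items():
        parts.append(f'<workflow name="{name}">\n{steps}\n</workflow>')
    parts.append("</workflows>")
    if d.connectors:
        parts.append("Available connectors: " + ", ".join(d.connectors))
    return "\n".join(parts)


def build_request(d: Deployment, user_message: str, model: str, max_tokens: int = 4096) -> dict:
    """Assemble a Messages API request body (not sent anywhere here)."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": build_system_prompt(d),
        "messages": [{"role": "user", "content": user_message}],
    }


if __name__ == "__main__":
    demo = Deployment(
        knowledge={"pricing_rules.md": "Hypothetical: list price minus 5% above 10,000 units."},
        workflows={"sales_proposal": "1. Confirm customer and products. 2. Apply pricing rules. "
                                     "3. Fill the proposal template."},
        connectors=["crm"],
    )
    print(build_request(demo, "Draft a proposal for customer C-42.", model="YOUR_MODEL_ID")["system"])
```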

What I'd tell a CTO considering their options

If you're weighing custom AI versus Claude AI, here's how I'd think about it.

First, list your use cases. All of them. Not the flashy moonshot ones, but the boring everyday workflows that eat your team's hours. Document generation. Email drafting. Data analysis. Report compilation. Knowledge retrieval. That's where 80% of the value lives.

Second, try deploying Claude AI on your highest-volume use case with real instructions and real data. Not a quick chat test. A proper deployment with a knowledge file, structured instructions, and an output specification. Give it two weeks.

Third, measure the result. If Claude AI handles it well (and in my experience, it will), you just saved yourself $300K and six months. Scale to the next use case. And the next. And the next.
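Measuring the result doesn't have to be elaborate. A small harness like the one below, run against a fixed set of real inputs, gives you a pass rate you can compare week to week. Running each case several times also shows how consistently the instructions are followed. The `generate` callable is a placeholder for whatever calls your deployment, and the must-include checks are a deliberately simple stand-in for your own output specification. The stub exists only so the sketch runs offline.

```python
from typing import Callable, Dict, List


def pass_rate(generate: Callable[[str], str], cases: List[Dict], runs: int = 1) -> float:
    """Fraction of (case, run) pairs whose output contains every required string."""
    passed = total = 0
    for case in cases:
        for _ in range(runs):
            output = generate(case["input"])
            total += 1
            if all(required in output for required in case["must_include"]):
                passed += 1
    return passed / total


if __name__ == "__main__":
    cases = [
        {"input": "Proposal for C-42", "must_include": ["Pricing", "Delivery terms"]},
        {"input": "Proposal for C-77", "must_include": ["Pricing", "Validity"]},
    ]

    def stub_generate(prompt: str) -> str:  # placeholder for a real Claude AI call
        return "Pricing: ...\nDelivery terms: ..."

    print(pass_rate(stub_generate, cases, runs=3))  # 0.5
```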

The custom model conversation should only start when you hit one of the genuine exceptions I listed above. Until then, you're not bottlenecked on model capability. You're bottlenecked on deployment.

And that's a much easier problem to solve.

If you want help figuring out where Claude AI fits in your organization, I'm happy to talk. Start a conversation →


Founder of Settle. Deploys Claude AI into mid-market companies and manufacturers. Structured rollouts, production-grade instructions, real results.
